SIGGRAPH 2018 Mini-Recap

I attended SIGGRAPH for the third time this year, as it made its third appearance up the street at the Vancouver Convention Centre. My attendance was more of a one-foot-in type of visit this year, but I tried to absorb what I could. Here’s my mini-recap:

A little bit on SIGGRAPH: the name stands for Special Interest Group on Computer GRAPHics and Interactive Techniques, and its annual conference is centered around, you guessed it, computer graphics.

The Emerging Technologies section of the conference was a great place to start, showcasing research and work in the realm of immersive technologies that surround us.

VR feedback was a focal point, featuring projects that explored ideas beyond pulsing a motor for vibration.

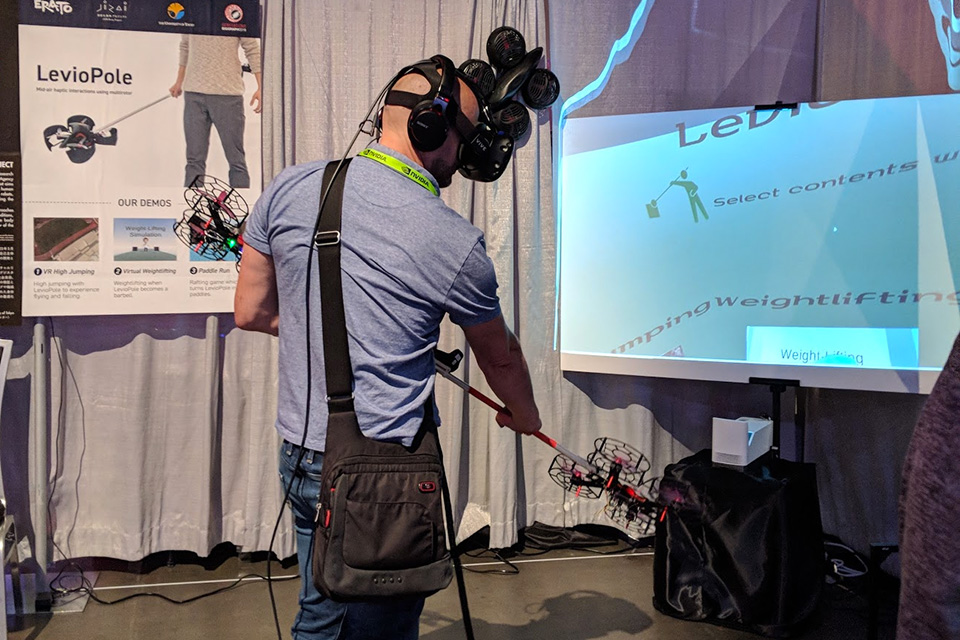

First up was a University of Tokyo project titled LevioPole, a rod you can wield that provides resistance via what look like quadcopters at each end. It was paired with a flight demo and a weight-lifting demo.

Researchers from KAIST in South Korea also experimented with air in their Wind-Blaster project. Using a pair of high-powered fans, it produces directional force feedback similar to the recoil of firing a weapon.

Takeaway: drone tech has brought nice gains in airflow, but be careful not to blow your flyers off.

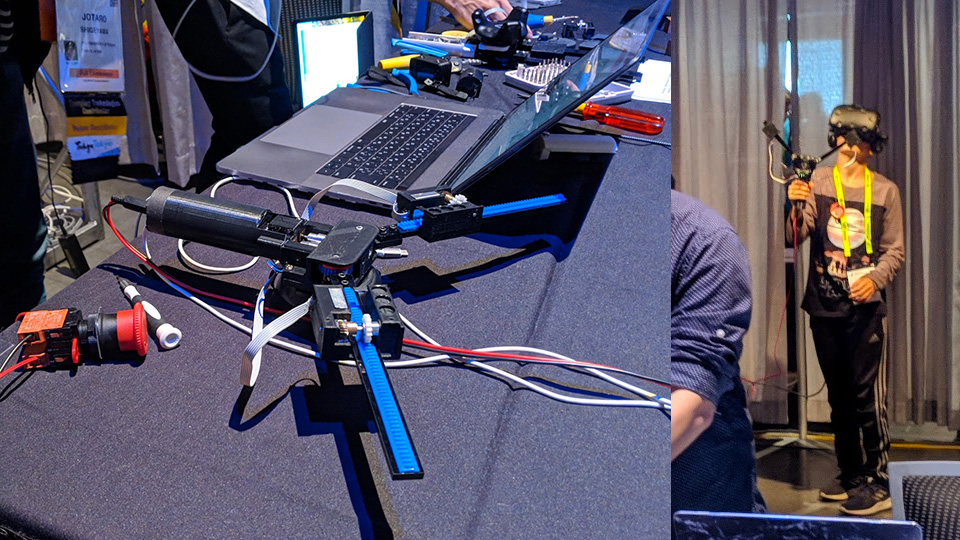

Transcalibur, another U of Tokyo project, demonstrates how manipulating an object’s center of gravity can change how it feels in the hand, improving the immersive experience of wielding virtual objects.

Takeaway: careful simulation of weight and feel can help with shape perception.
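To make the center-of-gravity idea a bit more concrete, here is a rough toy model of my own (not the Transcalibur team’s method). It treats a controller as a few point masses along a handle and shows that sliding one weight outward leaves the total mass unchanged while shifting the center of mass and raising the moment of inertia about the grip. Every name and number in it is a made-up assumption for illustration.

```python
from dataclasses import dataclass


@dataclass
class PointMass:
    mass_kg: float
    position_m: float  # distance from the grip along the handle axis


def total_mass(parts):
    return sum(p.mass_kg for p in parts)


def center_of_mass(parts):
    # Mass-weighted average position, measured from the grip.
    return sum(p.mass_kg * p.position_m for p in parts) / total_mass(parts)


def moment_of_inertia(parts):
    # Rotational inertia about the grip for point masses: sum of m * r^2.
    return sum(p.mass_kg * p.position_m ** 2 for p in parts)


def controller(weight_position_m):
    # Hypothetical controller: a 0.2 kg body centered 0.05 m from the grip,
    # plus a 0.1 kg movable weight. These numbers are assumptions.
    return [PointMass(0.2, 0.05), PointMass(0.1, weight_position_m)]


near = controller(0.10)  # weight pulled in close to the hand
far = controller(0.30)   # weight pushed out toward the tip

for label, parts in (("near", near), ("far", far)):
    print(label, total_mass(parts), center_of_mass(parts), moment_of_inertia(parts))
```

Same mass on the scale, but the “far” configuration resists being swung much more, which is roughly why a light controller can pass for something longer or heavier.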

Collaboration now spans deep undersea fiber cables. UTokyo (again) plus Keio U have fused together to produce Fusion, a tele-collab system. It’s a backpack with an extra set of arms that can be controlled by another participant. Imagine an auto mechanic remote-controlling this to swap a part for ya 🙂

Takeaway: an extra pair of hands is super handy, but how is the latency?

Instead of cameras peering over the shoulder, perhaps Takayuki Todo’s SEER (Simulative Emotional Expression Robot) might be an alternate pairing for a two-headed ogre approach. By default, SEER mimics the facial expressions of the participant, and its eyes are especially lifelike.

Takeaway: this one is for future robots, though it already shows how much depth can be created with so little actuation.

Moving to the curated exhibits in the Art Gallery, You are the Ocean caught my attention. Created at Northwestern University, this demo generates its weather and water from the participant’s brainwaves. Concentrate and the seas become rough; relax and they calm.

Takeaway: to me this project is a reflection of the mental health issues we see today, and it may even help people practice taking control of their emotions and state of mind.

Or one could escape from it all. The folks at Tangible Interaction put out Haven, which looked like a croissant in powdered sugar at first glance. But once inside, it comes as advertised — a tranquil, shielded place to rest, recover and restart your day.

Takeaway: not all senses need to be bombarded.

On to the exhibit floor, where Google was trying to demo a collaborative AR experience in which multiple participants can either manipulate the environment or steer a camera for non-participants. It was running into network issues while I was present.

Takeaway: enterprise VR / AR will push network requirements. Is it going to spearhead 5G? Probably not.